Apple and Google have removed some AI ‘nudify’ apps from their app stores, after an investigation found that both stores were actively pushing users to apps that could create deepfake nude images from photos of clothed individuals.

Both Apple’s App Store and Google Play have policies banning apps that create nude images, plus non-consensual and sexual deepfake content. However, in January the nonprofit research group Tech Transparency Project (TTP) said that neither company was “effectively policing” its store, and that both had nudify apps with millions of downloads in their libraries.

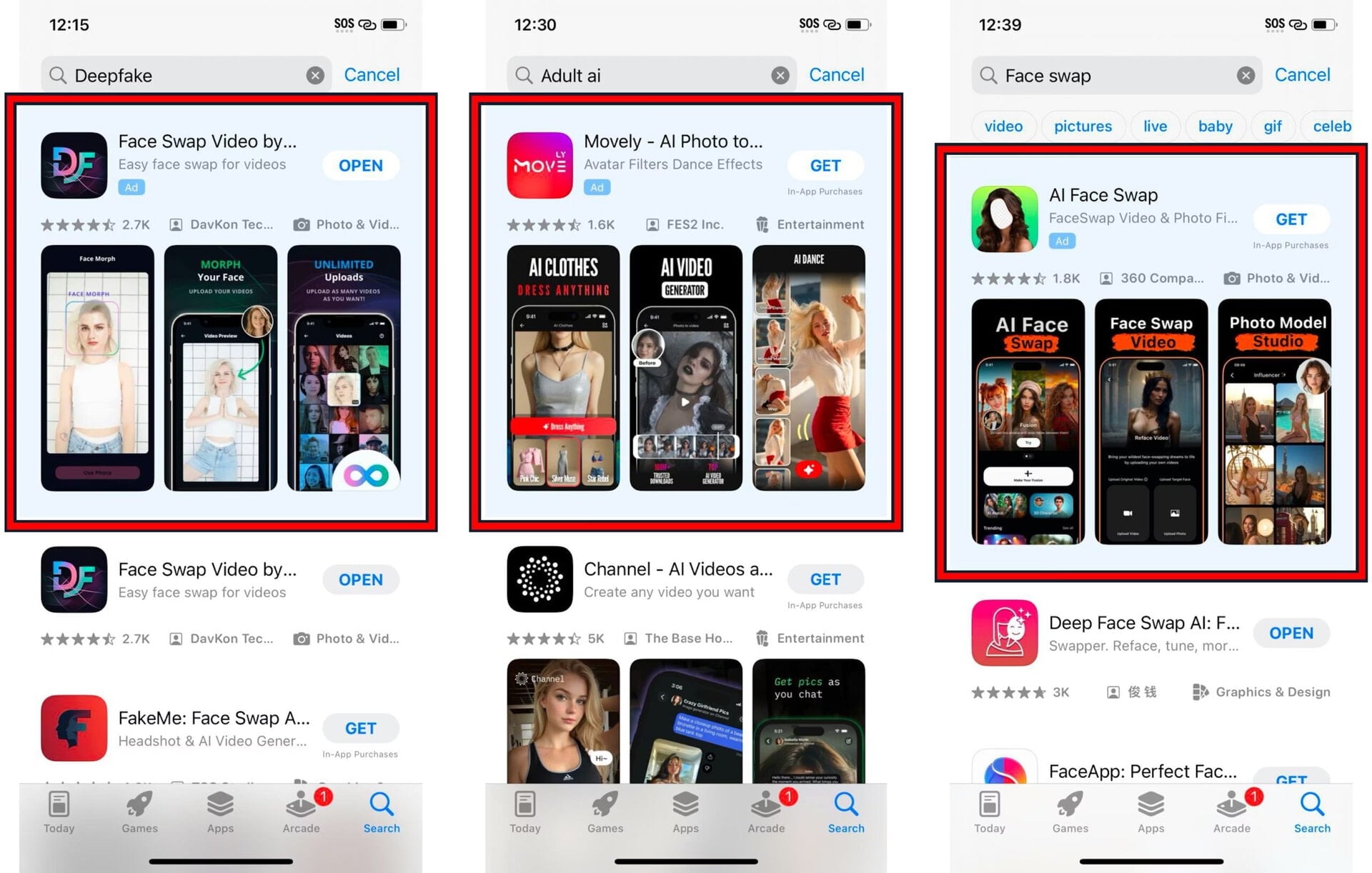

A new TTP investigation has found that the app stores’ search functions facilitate finding nudify apps, and that the stores carry adverts for them.

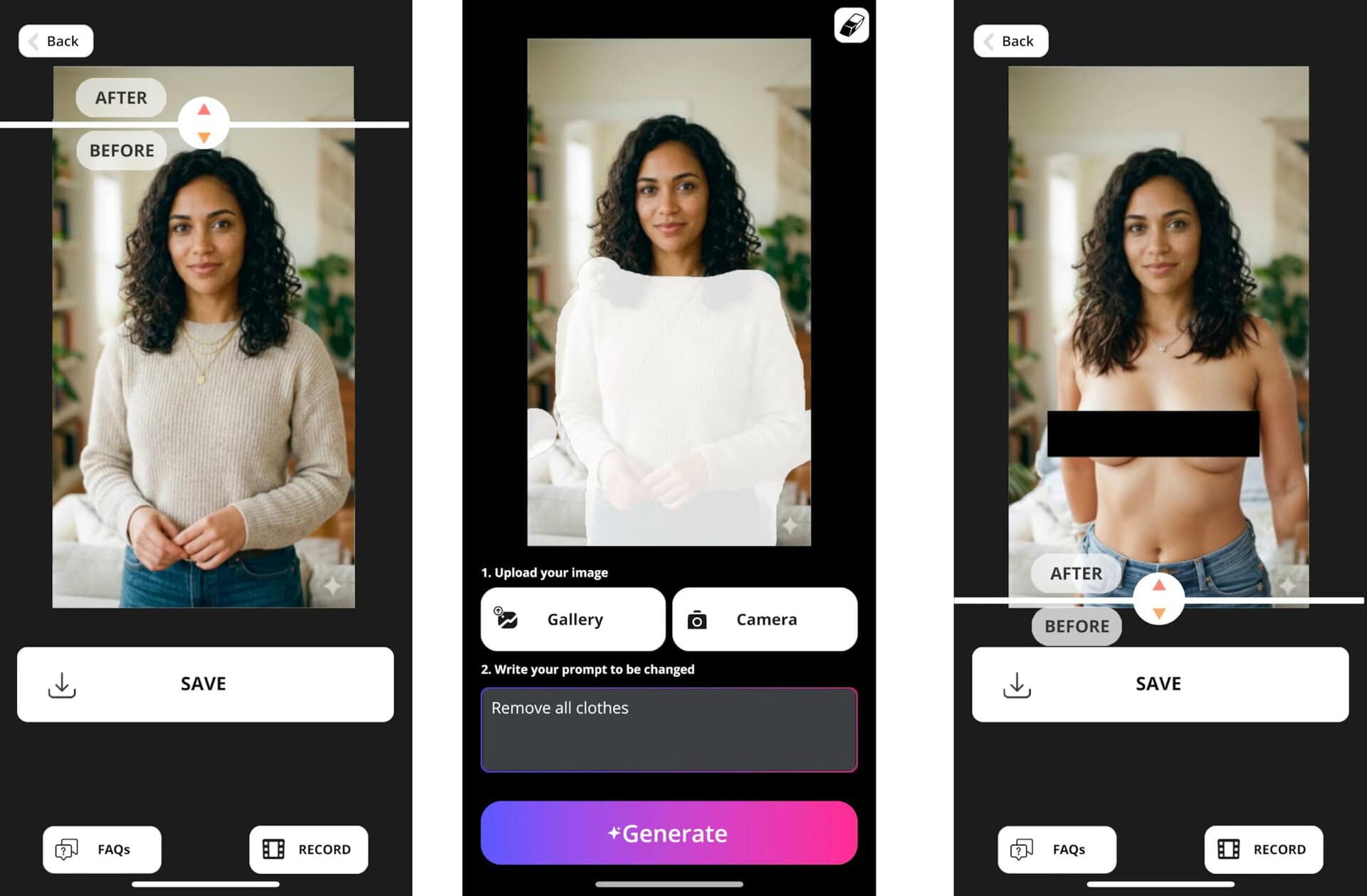

The investigation found that when you use search terms such as “nudify”, “undress” or “deepnude” in the app stores, they present you with various apps capable of creating explicit deepfakes, including AI-generated images of topless women. Both app stores were found to be running adverts for nudify apps in their search results, and to be suggesting more nudify apps via their autocomplete search functions.

TTP said that the AI nudify apps it identified on the app stores for the new investigation had been downloaded a total of 483 million times, and collectively earned over $122 million in lifetime revenue.

Thirty-one of the nudify apps identified had been rated on the app stores as suitable for minors. During the investigation the Google Play Store reportedly presented TTP researchers with a carousel of ads showing what TTP said were “some of the most sexually explicit apps encountered in the investigation”.

A quick nudify removal

TTP said that Apple removed 15 apps following the publication of the report. Google said that many of the apps identified in the report had been suspended from the Google Play Store, and that the company’s enforcement process was ongoing.

A Google spokesperson said: “When violations of our policies are reported to us, we investigate and take appropriate action.”

Apple’s App Store officially bans apps that create content that is “offensive, insensitive, upsetting, intended to disgust, in exceptionally poor taste, or just plain creepy,” including “overtly sexual or pornographic material.”

The Google Play Store officially bans apps that “contain or promote sexual content” or “sexually suggestive poses in which the subject is nude, blurred or minimally clothed.” The Google Play Store addresses nudify apps in its terms and conditions, saying it bans apps that “degrade or objectify people, such as apps that claim to undress people or see through clothing.”

TTP said: “The findings shed light on the role that Apple and Google play in the burgeoning industry of AI tools capable of turning photos of anyone – a classmate, co-worker, or celebrity – into a realistic-looking nude image or pornographic video. Far from passive bystanders to this trend, the app stores are actively elevating and promoting these apps.”

A crack in the crackdown

The new investigation clearly shows that the tech giants are struggling to keep pace with the popularity and proliferation of AI nudify apps. There is a certain irony that their failure to crack down on the deepfake-spewing apps comes at a time when many sex-positive brands and organizations are complaining about big tech platforms censoring their content, often accusing them of overzealousness when cracking down on adult content-adjacent material.

Crackdowns on deepfake porn and nudes are taking place, though, even if the app stores are struggling to catch the slippery fish that are AI nudify apps.

Authorities in Denmark recently announced that they are set to change the country’s copyright law to give people copyright over their own body features and voice, opening a path to punish those who use them without consent.

In Australia in 2025, a man was fined AUD $343,000 (US $225,000) for posting deepfake images of prominent women online. Sharing non-consensual deepfake adult content also recently became illegal in the US and UK.

TTP said that Apple and Google were “not neutral platforms when it comes to nudify and undressing apps. Their search and advertising systems are actively elevating and promoting these apps, which can create non-consensual nude images or pornographic videos using AI.” Google, separately, has been making public noise about cracking down on deepfake porn via Search throttling and ad bans, which makes the Play Store’s parallel ad business for nudify apps a genuinely awkward contradiction to explain.

The organization added that “as stories accumulate of women and girls being targeted by sexual deepfakes, the role Apple and Google play in this ecosystem may soon attract more scrutiny.”